The Complete AI PM Interview Question Guide (2026)

AI PM interviews are no longer just traditional PM interviews with “AI sprinkled on top.”

At companies like OpenAI, Anthropic, Perplexity, Google DeepMind, and Meta AI, interviewers explicitly test AI-specific thinking across safety, model limitations, evaluation, and strategy.

The easiest way to understand this is:

AI PM interviews = Traditional PM frameworks + AI system thinking

To master them, you don’t need multiple frameworks.

You need to master the 6 core question types below:

🧠 CORE AI PM INTERVIEW QUESTION TYPES

These 6 categories cover ~90% of AI PM interview questions.

1. AI Product Design

What interviewers test

Your ability to design AI-powered experiences, considering:

probabilistic outputs

human-in-the-loop

trust & explainability

model limitations

Example questions

Design an AI tutor for learning coding

Design an AI assistant for Uber drivers

Design AI features for Notion

Build an AI tool for customer support agents

What great answers include

AI capability mapping

fallback mechanisms

failure handling

user trust design
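To make "fallback mechanisms" and "failure handling" concrete in an answer, it can help to sketch the routing logic. The snippet below is a minimal illustration, not a production design: `ModelResult`, `route`, the confidence score, and the 0.75 threshold are all hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass

# Hypothetical threshold; in practice it would be tuned on labeled data.
CONFIDENCE_THRESHOLD = 0.75


@dataclass
class ModelResult:
    answer: str
    confidence: float  # assumed to be a score in [0, 1]


def route(result: ModelResult, threshold: float = CONFIDENCE_THRESHOLD) -> dict:
    """Decide whether to show the AI answer or fall back to a human."""
    if result.confidence >= threshold:
        return {"source": "ai", "answer": result.answer, "needs_review": False}
    # Fallback path: never present a low-confidence answer as if it were reliable.
    return {
        "source": "human_handoff",
        "answer": "I'm not sure about this one, so I'm passing it to a support agent.",
        "needs_review": True,
    }


confident = route(ModelResult("Reset your password from Settings.", 0.92))
uncertain = route(ModelResult("Your refund was approved.", 0.41))
print(confident["source"], uncertain["source"])
```

In an interview, the point is the product decision behind the threshold: what the user sees when the model is unsure, and who picks up the handoff.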

Framework

Use CIRCLES (adapted for AI)

Add two extra steps:

AI capability assessment

human override design

We’re doing a deep dive on each question type for AI PM interviews.

2. AI Product Improvement

What interviewers test

Your ability to diagnose AI product issues and improve them, because AI products behave differently from deterministic software.

Example questions

AI coding assistant suggestions are inaccurate. Improve it.

Users don’t trust AI outputs. What would you change?

Skills tested

root cause analysis

prompt/data/system fixes

iteration loops

Framework

Use:

PQ-GUP-SEMS (our existing framework)

Add AI diagnostics:

training data

prompt layer

retrieval layer

evaluation pipeline
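One way to ground root cause analysis across these layers is to label a sample of failed responses by the layer that most likely caused them, then rank the layers. The sketch below assumes a hand-labeled review log; the `failed_cases` data and the `failures_by_layer` helper are hypothetical and only illustrate the idea.

```python
from collections import Counter

# Hypothetical review log: each failed response is tagged with the layer
# a reviewer believes caused the failure.
failed_cases = [
    {"id": 1, "layer": "retrieval"},
    {"id": 2, "layer": "prompt"},
    {"id": 3, "layer": "retrieval"},
    {"id": 4, "layer": "training_data"},
    {"id": 5, "layer": "retrieval"},
    {"id": 6, "layer": "prompt"},
]


def failures_by_layer(cases):
    """Return (layer, count, share) tuples, most frequent layer first."""
    counts = Counter(case["layer"] for case in cases)
    total = sum(counts.values())
    if total == 0:
        return []
    return [(layer, n, n / total) for layer, n in counts.most_common()]


ranking = failures_by_layer(failed_cases)
for layer, count, share in ranking:
    print(f"{layer}: {count} failures ({share:.0%})")
```

Here the retrieval layer accounts for half of the labeled failures, so that is where the first fix would go.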

3. AI Product Strategy

What interviewers test

Your ability to make strategic decisions in the AI ecosystem.

Example questions

Should Spotify build its own LLM?

Should a startup rely on OpenAI APIs or build models?

What is OpenAI’s moat?

Skills tested

AI economics thinking

build vs buy decisions

data moats

distribution advantage

Frameworks

BUS (Business → Users → Strategy)

4. AI Safety & Responsible AI

What interviewers test:

Your ability to prevent harm and misuse of AI systems.

This is a major focus for companies like Anthropic and OpenAI.

Example questions:

How do you approach GenAI safety in consumer products?

How would you prevent harmful outputs from a chatbot?

How would you detect bias in AI systems?

How would you red-team an AI feature?

Risks you must consider:

Common AI risks include:

Framework:

P-PRIME

P - Product Context

P - Potential Risks

R - Risk Mitigation

I - Implementation Guardrails

M - Monitoring & Metrics

E - Evolution & Iteration

5. AI Evaluation & Metrics

This is a huge category in AI PM interviews.

What interviewers test:

Your ability to measure AI systems correctly.

Your understanding that AI products require different metrics than traditional software.

AI metrics are different because:

outputs are probabilistic

quality is subjective

accuracy ≠ user satisfaction

Example questions:

How would you measure success of ChatGPT?

What metrics should an AI writing assistant track?

How would you evaluate hallucination rate?

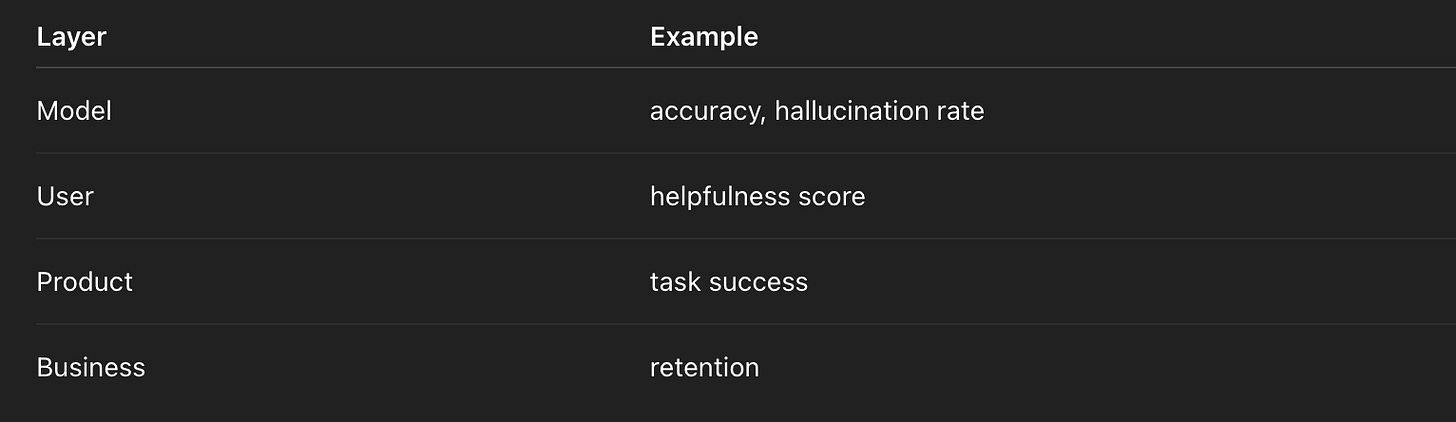

Metrics categories:

AI interviews often probe the trade-off between accuracy and user satisfaction.
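A small worked example shows why accuracy and satisfaction have to be tracked separately. It assumes a sample of responses where a human reviewer has labeled each one as hallucinated or not, and the user has left a thumbs-up or thumbs-down. The data and field names are made up for illustration.

```python
# Hypothetical labeled sample: a reviewer's factuality label plus the
# user's thumbs-up / thumbs-down for each response.
responses = [
    {"hallucinated": False, "thumbs_up": True},
    {"hallucinated": False, "thumbs_up": False},
    {"hallucinated": True, "thumbs_up": True},
    {"hallucinated": False, "thumbs_up": True},
    {"hallucinated": True, "thumbs_up": False},
]


def rate(items, key):
    """Share of items where the boolean field `key` is True."""
    if not items:
        return 0.0
    return sum(1 for item in items if item[key]) / len(items)


hallucination_rate = rate(responses, "hallucinated")
satisfaction_rate = rate(responses, "thumbs_up")

# Responses users liked even though they were factually wrong:
# the cases where satisfaction and accuracy disagree.
liked_but_wrong = sum(
    1 for r in responses if r["hallucinated"] and r["thumbs_up"]
)

print(hallucination_rate, satisfaction_rate, liked_but_wrong)
```

The "liked but wrong" bucket is the interesting one to talk through: a satisfaction metric alone would count it as a win.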

Framework

EVAL-AI

6. AI Technical Judgment (Architectural Decisions)

This category is increasingly common.

You are not expected to code, but you must understand AI architecture.

Example questions:

When should you use RAG vs fine-tuning?

When should you use embeddings?

When should you build an AI agent vs a workflow?

Design an agentic AI system that autonomously adapts to new tasks.

Skills tested:

ML system understanding

engineering collaboration

feasibility assessment

Framework:

STACK-AI

🚀 ADVANCED MODULES

These are important in real-world PM work, but appear less frequently in interviews, especially for junior roles.

7. AI Product Operations

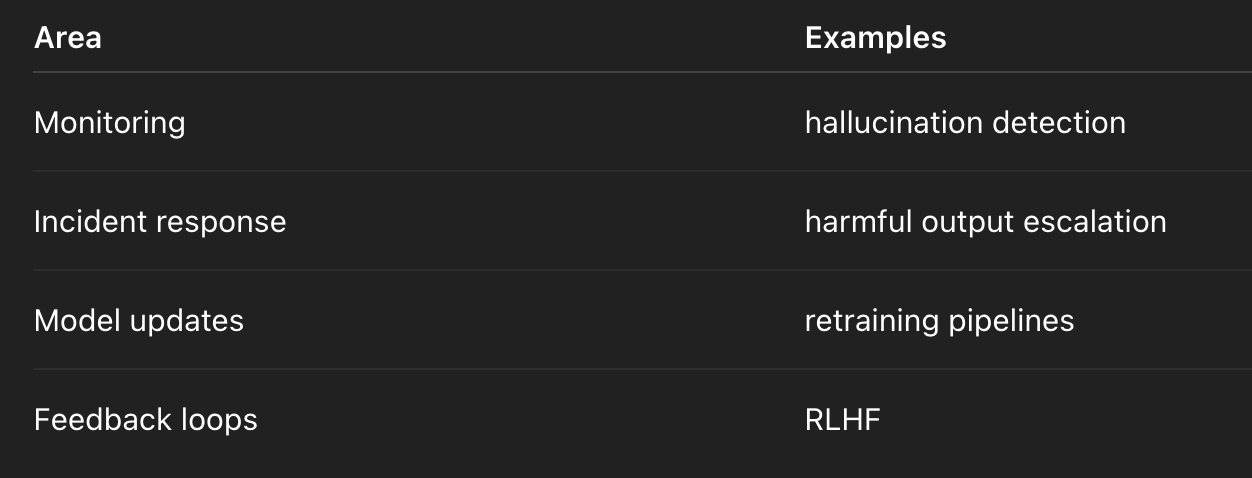

This category tests whether you can run AI systems in production.

AI products require constant monitoring.

Example questions:

How would you monitor model drift?

What should an AI incident response look like?

How do you handle harmful outputs after launch?
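For the model drift question, it helps to name at least one concrete signal. One common option is the Population Stability Index (PSI), which compares how inputs or model scores are distributed now against a baseline period. The sketch below assumes the data is already bucketed into matching bins expressed as proportions. The example numbers and the 0.2 alert level are illustrative assumptions (a frequently quoted rule of thumb, not a standard), and a real team would tune them.

```python
import math


def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two binned distributions.

    `expected` and `actual` are lists of bin proportions in the same bin order.
    `eps` guards against empty bins, which would make the log undefined.
    """
    total = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)
        a = max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


# Hypothetical share of traffic per confidence-score bucket.
baseline = [0.25, 0.25, 0.25, 0.25]
this_week = [0.10, 0.20, 0.30, 0.40]

ALERT_LEVEL = 0.2  # assumed rule-of-thumb threshold

score = psi(baseline, this_week)
drift_alert = score > ALERT_LEVEL
print(round(score, 3), drift_alert)
```

A PSI alert only says the distribution moved. The follow-up in an interview answer is what happens next: who gets paged, what gets re-evaluated, and when the model is rolled back or retrained.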

Operational areas:

Where it shows up:

embedded in metrics questions

embedded in safety questions

senior PM interviews

8. AI Platform & Ecosystem Strategy

This category appears frequently in senior AI PM interviews.

Example questions:

Should OpenAI create an app ecosystem?

How should Anthropic monetize Claude APIs?

Should Google open-source its models?

Skills tested:

platform effects

developer ecosystem strategy

pricing models