How to Answer AI Product Strategy Questions | AI PM Interview Guide (2026)

A step-by-step guide to answering AI product strategy questions in AI PM interviews at AI-first companies.

Picture this. You are in a final-round interview at a Series B AI startup. The interviewer asks:

→ “Should Spotify build its own LLM?”

You have practiced product sense. You know your metrics frameworks. But this feels different - there is a technical undercurrent, and a sense that the wrong answer will expose you.

So you do what most candidates do. You say something like:

“Spotify has a lot of music data, so they should probably build their own model to create a data moat.”

The interviewer nods politely. But you have just failed the test, and you did not even know it.

Here is the dirty secret: most candidates fail AI strategy questions not because they lack PM instincts, but because they treat them like generic strategy questions with an AI label slapped on top. They say “data moat” reflexively, without explaining why any particular data is defensible. They recommend building from scratch because it sounds bold.

That is not what interviewers are testing.

They want to know:

Can you reason about inference costs?

Evaluate API dependency risk?

Make a phased recommendation instead of a binary yes-or-no?

This article gives you a complete, repeatable system for any AI product strategy question.

What Makes AI Product Strategy Questions Different?

Before diving into the framework, it is worth being precise about what kind of question we are talking about.

AI product strategy questions are not product sense questions. They are not metrics questions. They are not even general strategy questions. They occupy a distinct category, and recognizing that category is the first step toward answering well.

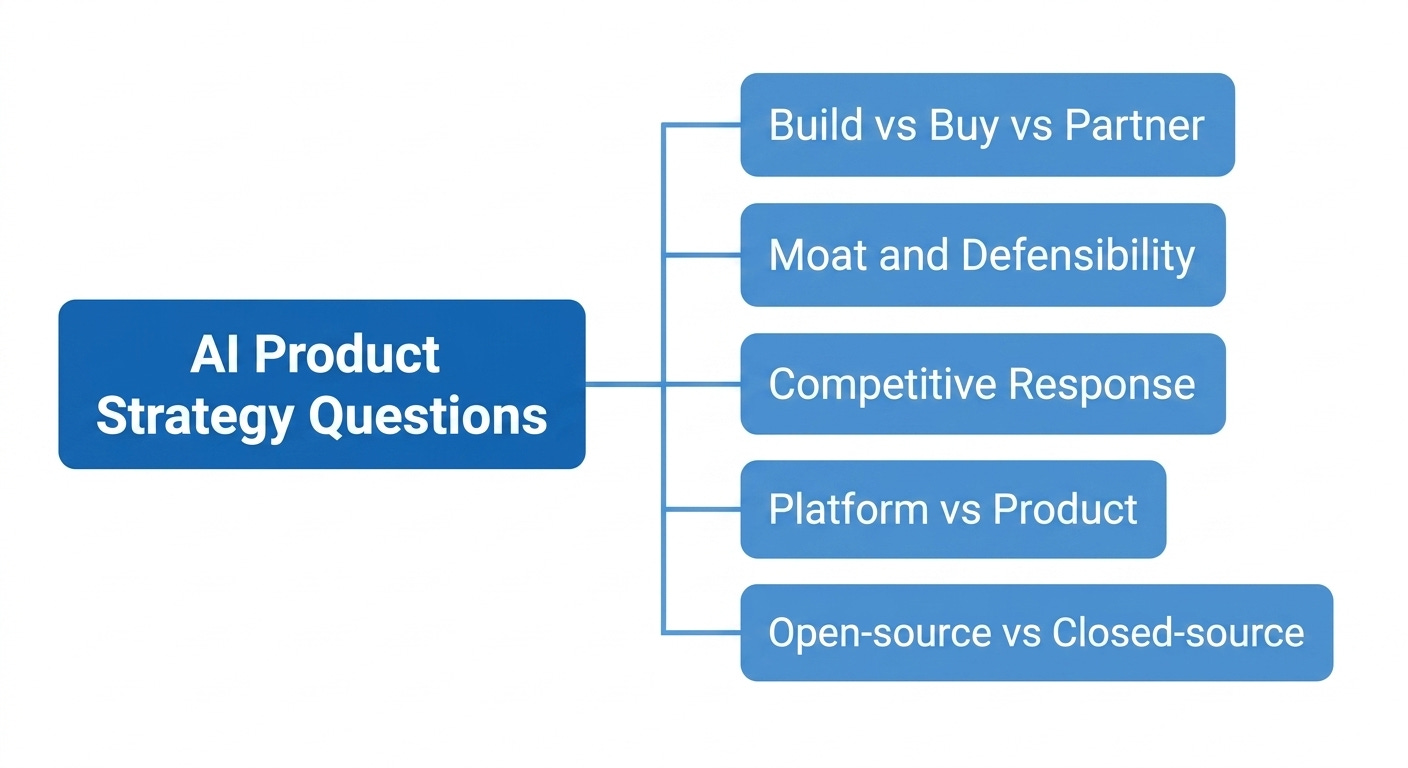

Here is a quick taxonomy of AI strategy question types you will encounter:

Build vs. Buy vs. Partner: Should [Company X] build its own model, use an API, or partner with an AI provider? (Spotify + LLM, GitHub + Copilot, Salesforce + Einstein AI)

Moat and Defensibility: What is OpenAI’s moat? How does Perplexity differentiate against Google? What stops a competitor from replicating this AI product in 6 months?

Competitive Response: How should [Incumbent] respond to an AI-native competitor eating its lunch? (Duolingo vs. AI tutors, Adobe vs. Midjourney, Bloomberg vs. Perplexity)

Platform vs. Product: Should [Company X] remain an AI-powered product or build an AI platform? (Hugging Face, Cohere, Anthropic’s API strategy)

Open-source vs. Closed-source: Should Google open-source its models? What does Meta gain from making Llama open-source?

Each of these has surface-level similarities to a general strategy question. But they all require you to reason through a layer of AI-specific complexity that a standard business strategy framework simply does not address. That is the gap this article fills.

Interviewers are testing three layers simultaneously:

Business judgment: Can you identify what actually matters for this company’s competitive position, revenue model, and constraints?

AI-specific trade-off thinking: Do you understand the real economics of AI - training costs, inference at scale, model commoditization, open-source parity?

Intellectual honesty about uncertainty: AI markets move fast. Interviewers respect candidates who acknowledge what they do not know, rather than confidently stating things that were true 18 months ago and are no longer true today.

The hidden test underneath all of this: Are you pattern-matching on AI buzzwords, or do you actually understand why certain AI strategies work in certain contexts and fail in others?

What Are Interviewers Actually Testing?

When an interviewer asks an AI product strategy question, here is what they are listening for:

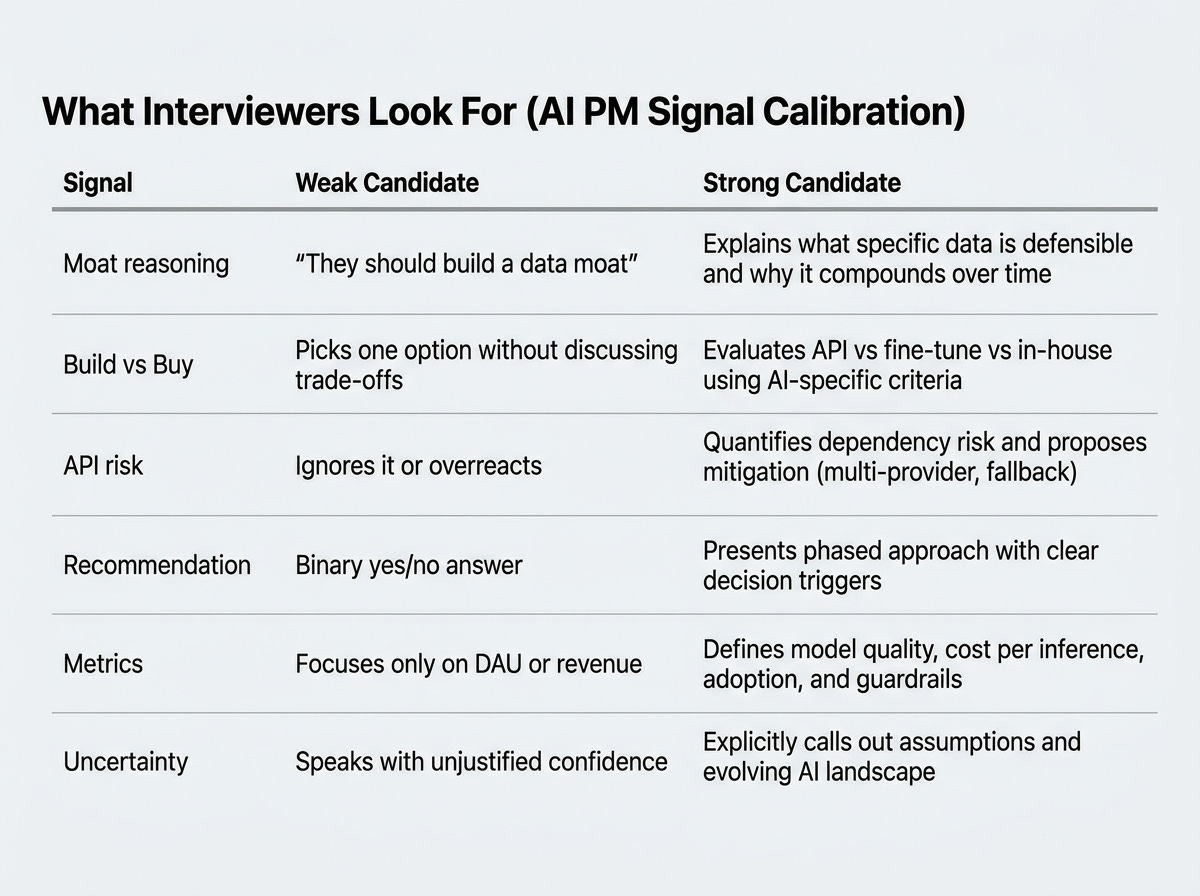

1. Can you reason about AI moats, or do you just name-drop them?

Saying "Spotify should build a data moat" is not an insight. An insight is: "Spotify has 600 million users generating implicit listening behavior - skip rates, replay rates, playlist curation patterns - that no music label or API provider can access. That behavioral signal, combined with a fine-tuned model, could produce recommendation quality that is genuinely hard to replicate. But only if Spotify actually trains on it, which requires capabilities they do not have today." That is what moat reasoning looks like.

2. Do you understand when building your own model makes sense, and when it does not?

Most companies should not build their own foundation model. The cost is prohibitive, the talent market is hyper-competitive, and open-source models are rapidly closing the quality gap. A strong candidate knows this. A weak candidate recommends building from scratch to sound impressive.

3. Can you evaluate API dependency risk without overcorrecting into fear-mongering?

APIs are fast, cheap, and increasingly capable. Dismissing them as “too risky” is just as wrong as ignoring the risk entirely. The right answer acknowledges the risk, quantifies it where possible, and builds a hedge strategy.

4. Do you balance speed-to-market with long-term differentiation?

AI strategy questions almost always have a time dimension. The best answer today might be wrong in 24 months. Interviewers want to see you think in phases, not make a single static recommendation.

5. Do you know the AI-specific metrics that matter?

“Measure success by DAU and revenue” is incomplete for an AI product. Interviewers want to hear about cost per inference, model quality metrics (win rate, human eval scores), latency thresholds, and fine-tuning ROI.

Here is a quick reference for what separates strong from weak answers:

How to Answer AI Product Strategy Questions

You may already know the BUS framework (Business Objectives, User Needs, Solutions). It is a solid general-purpose structure. But applied to AI strategy questions without modification, it will produce generic answers.

Here is how to adapt each step of BUS for AI.

Step 1: B - Business Objectives (AI Lens)

In a traditional product strategy question, you would ask:

What is the company’s mission?

What are its revenue streams?

What does it want to achieve?

In an AI strategy question, you need to go further. Ask:

1. Stage of AI maturity:

Is this company AI-native (AI is the core product), AI-first (AI is central to the roadmap), or AI-augmented (AI is a feature among many)?

Spotify is AI-augmented. OpenAI is AI-native. Each has very different strategic options.

2. Existing data assets:

What proprietary data does this company sit on?

Is it labeled, structured, and actually usable for training?

“We have a lot of data” is not an asset. “We have 5 years of user behavioral logs with ground-truth labels that no one else can replicate” is an asset.

3. Technical capability:

Does this company have ML infrastructure?

Do they have a team that can fine-tune models, maintain them, monitor for drift?

If not, building is not just expensive - it is operationally impossible without a multi-year hiring ramp.

4. Competitive pressure from AI-native players:

Is this company being disrupted by an AI-native competitor right now?

If yes, speed-to-market probably outweighs long-term moat building.

If no, there is time to build the right way.

5. Revenue model and AI fit:

Is AI a cost center or a revenue driver here?

Companies that can charge for AI-powered outcomes (GitHub Copilot’s subscription, Grammarly Premium) face a different build-vs-buy calculus from companies where AI is pure infrastructure.

💡 Interview Tip:

Do not rush past this step.

Interviewers are evaluating whether you can size up a company’s AI position quickly and accurately. A sharp Business Objectives section signals that everything you recommend next is grounded in reality, not generic advice.

What Step 1 looks like for our Spotify example

Spotify is AI-augmented, not AI-native. Its gross margins are structurally constrained by music licensing (roughly 70% of revenue goes to rights holders), which means AI inference costs hit harder than they would at a SaaS company.

It has no ML research team capable of training a foundation model today. Its strongest AI asset is distribution: 600 million users generating rich behavioral data. This context shapes every strategic option that follows.
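To see why licensing costs make inference “hit harder,” here is a minimal back-of-the-envelope sketch. The 30% gross margin follows from the roughly 70% licensing share above (ignoring other costs of revenue); the 80% SaaS margin, the $10 monthly revenue per user, and the $0.60 monthly inference cost per user are hypothetical numbers chosen only for illustration.

```python
# Back-of-the-envelope sketch. All inputs are hypothetical illustrations,
# not reported figures for Spotify or any specific SaaS company.


def inference_share_of_gross_profit(revenue_per_user: float,
                                    gross_margin: float,
                                    inference_cost_per_user: float) -> float:
    """Return the fraction of per-user gross profit consumed by inference."""
    gross_profit_per_user = revenue_per_user * gross_margin
    return inference_cost_per_user / gross_profit_per_user


REVENUE_PER_USER = 10.00  # hypothetical monthly revenue per user (USD)
INFERENCE_COST = 0.60     # hypothetical monthly inference cost per user (USD)

# ~70% of revenue paid to rights holders leaves at most ~30% gross margin.
streamer = inference_share_of_gross_profit(REVENUE_PER_USER, 0.30, INFERENCE_COST)
# Assumed 80% gross margin for a SaaS comparison point.
saas = inference_share_of_gross_profit(REVENUE_PER_USER, 0.80, INFERENCE_COST)

print(f"Licensing-heavy streamer: {streamer:.1%} of gross profit")  # 20.0%
print(f"SaaS company:             {saas:.1%} of gross profit")      # 7.5%
```

The same inference bill takes nearly three times as large a bite out of gross profit (20% vs. 7.5%) when licensing has already consumed most of the revenue.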

Step 2: U - User and Customer Needs (AI Lens)

In a traditional product strategy question, user needs are about pain points and jobs-to-be-done.

In AI strategy, you need to add a layer of AI-specific user behavior.

1. Who benefits from this AI strategy decision?

There are often multiple stakeholders: end users consuming the AI product, developers building on top of it, enterprise buyers evaluating it for procurement, and internal teams who will maintain it. Be explicit about which user group you are optimizing for.

2. What AI capabilities do they actually need?

Not all users need the most powerful model. Many users need fast, cheap, and good enough. A writing assistant for a B2B SaaS company does not need GPT-4-level reasoning - it needs low latency, consistent output format, and enterprise data residency. Overpowering the solution is a real mistake.

3. How much do trust, reliability, and explainability matter?

Especially for enterprise and regulated industries, users need to understand why the AI made a recommendation. A healthcare PM recommending a black-box model without addressing explainability will lose the enterprise deal. Mention this where relevant.

4. Will they pay for AI-powered outcomes?

This is often the hinge question. Will users pay a meaningful premium for AI that is 20% better than the baseline? If yes, that premium can justify the cost of building. If not, an API approach, along with the margin compression that comes with it, is perfectly acceptable.
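One way to make the hinge question concrete is a simple payback calculation: how many months of premium revenue it takes to recover the upfront cost of building. This is a hypothetical model; the build cost, paying-user count, premium, and running cost below are made-up inputs, and a real analysis would also account for churn, discounting, and the API costs you avoid.

```python
import math


def payback_months(build_cost: float,
                   paying_users: int,
                   monthly_premium: float,
                   monthly_run_cost: float) -> float:
    """Months until premium revenue recovers an upfront build cost.

    Returns math.inf when the premium never covers the monthly running cost,
    the case where an API approach is usually the better answer.
    """
    monthly_net = paying_users * monthly_premium - monthly_run_cost
    if monthly_net <= 0:
        return math.inf
    return build_cost / monthly_net


# Hypothetical inputs: $5M to build, 100k paying users, $50k/month to run.
strong_wtp = payback_months(5_000_000, 100_000, 2.00, 50_000)  # users pay $2/month more
weak_wtp = payback_months(5_000_000, 100_000, 0.50, 50_000)    # users pay $0.50/month more

print(f"Strong willingness to pay: {strong_wtp:.1f} months")  # 33.3 months
print(f"Weak willingness to pay:   {weak_wtp} months")        # inf months
```

If the premium barely covers running costs, no build cost is ever recovered, which is exactly when the API route wins.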

💡 Interview Tip:

Candidates often skip the user lens in AI strategy questions because the question feels more macro and business-focused.

Do not skip it.

The user lens is where you demonstrate empathy for real humans interacting with AI systems, and it often surfaces constraints that change your recommendation entirely.

What Step 2 looks like for our Spotify example

End listeners do not care whether the AI powering their Discover Weekly runs on Spotify's own model or OpenAI's API - they care about relevance and speed.

The most promising segment is casual listeners who want contextual, mood-aware recommendations without switching apps. Neither this segment nor listeners at large need the most powerful frontier model; they need low latency and high relevance.

This immediately rules out building a frontier LLM from scratch, and points toward fine-tuning on Spotify's behavioral data instead.

Step 3: S - Solutions and Strategy (AI Lens)

This is where most of your interview time will be spent. The S step in an AI strategy question has five sub-components:

1. Generate four strategic options - always.

For any AI strategy question, you should generate at minimum four options:

Build: Train or fine-tune your own model from scratch or from an open-source base

Buy (API): Use a third-party model provider (OpenAI, Anthropic, Google, Cohere)

Partner: Co-develop with an AI provider, sharing IP and go-to-market

Hybrid: Use API now, migrate to a fine-tuned or proprietary model over 12-24 months

The hybrid option is almost always worth articulating, because it is the most realistic and pragmatic path for the majority of companies.
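The hybrid path usually hinges on a volume trigger: APIs cost more per request, while a self-hosted fine-tuned model adds a fixed monthly cost (GPUs, MLOps staff) but a lower marginal cost. Here is a minimal break-even sketch under those assumptions; all prices are hypothetical and expressed per 1,000 requests to keep the arithmetic exact.

```python
import math
from typing import Optional


def api_cost(k_requests: float, api_price_per_k: float) -> float:
    """Monthly cost of serving k_requests (thousands) through a third-party API."""
    return k_requests * api_price_per_k


def self_hosted_cost(k_requests: float, fixed_monthly: float, variable_per_k: float) -> float:
    """Monthly cost of a self-hosted model: fixed infrastructure/team cost plus serving cost."""
    return fixed_monthly + k_requests * variable_per_k


def break_even_k_requests(api_price_per_k: float,
                          fixed_monthly: float,
                          variable_per_k: float) -> Optional[int]:
    """Smallest monthly volume (thousands of requests) at which self-hosting is no more expensive.

    Returns None if self-hosting never pays off (its marginal cost is not lower).
    """
    saving_per_k = api_price_per_k - variable_per_k
    if saving_per_k <= 0:
        return None
    return math.ceil(fixed_monthly / saving_per_k)


# Hypothetical prices: $2.00 per 1k requests via API; $0.50 per 1k self-hosted
# plus $60,000/month of fixed cost.
threshold = break_even_k_requests(2.00, 60_000, 0.50)
print(f"Self-hosting breaks even at about {threshold:,}k requests/month")  # 40,000k
```

Below that volume, the API is cheaper and faster to ship; above it, the migration in the hybrid plan starts to pay for itself on cost alone, before counting any quality gains from fine-tuning.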

2. Evaluate options against AI-specific criteria.

Do not just list pros and cons. Evaluate options systematically against criteria that matter in the AI context:

Data moat potential

Time to market

Cost and margin impact

Differentiation (can competitors replicate this easily?)

API dependency risk

Regulatory and compliance fit

Internal capability requirements
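A weighted scoring matrix is one way to evaluate the options against these criteria out loud instead of listing pros and cons. In this minimal sketch, the weights and 1-5 scores are hypothetical placeholders for a generic company, not an assessment of any real one; for the dependency-risk and capability criteria, a higher score means less risk or less new capability required.

```python
from typing import Dict, List, Tuple

# Hypothetical weights (percent, summing to 100) for the criteria above.
WEIGHTS: Dict[str, int] = {
    "data_moat": 20,
    "time_to_market": 20,
    "cost_and_margin": 15,
    "differentiation": 15,
    "low_api_dependency": 10,   # higher score = less dependency risk
    "compliance_fit": 10,
    "low_capability_need": 10,  # higher score = fewer new internal capabilities needed
}

# Hypothetical 1-5 scores per option (5 = best on that criterion).
SCORES: Dict[str, Dict[str, int]] = {
    "Build": {"data_moat": 5, "time_to_market": 1, "cost_and_margin": 1,
              "differentiation": 5, "low_api_dependency": 5,
              "compliance_fit": 4, "low_capability_need": 1},
    "Buy (API)": {"data_moat": 1, "time_to_market": 5, "cost_and_margin": 3,
                  "differentiation": 1, "low_api_dependency": 1,
                  "compliance_fit": 3, "low_capability_need": 5},
    "Partner": {"data_moat": 3, "time_to_market": 3, "cost_and_margin": 3,
                "differentiation": 3, "low_api_dependency": 3,
                "compliance_fit": 3, "low_capability_need": 3},
    "Hybrid": {"data_moat": 4, "time_to_market": 4, "cost_and_margin": 4,
               "differentiation": 3, "low_api_dependency": 3,
               "compliance_fit": 4, "low_capability_need": 3},
}


def weighted_score(option_scores: Dict[str, int], weights: Dict[str, int]) -> int:
    """Weighted total on a 100-500 scale (divide by 100 for a 1-5 average)."""
    assert set(option_scores) == set(weights), "every option must score every criterion"
    return sum(weights[c] * option_scores[c] for c in weights)


def rank_options(scores: Dict[str, Dict[str, int]],
                 weights: Dict[str, int]) -> List[Tuple[str, int]]:
    """Return (option, total) pairs sorted from best to worst."""
    totals = [(name, weighted_score(s, weights)) for name, s in scores.items()]
    return sorted(totals, key=lambda pair: pair[1], reverse=True)


assert sum(WEIGHTS.values()) == 100
for name, total in rank_options(SCORES, WEIGHTS):
    print(f"{name:<10} {total / 100:.2f}")
```

With these made-up inputs, Hybrid ranks first (3.65 out of 5), and Build’s strong moat scores are offset by weak time-to-market, cost, and capability scores. In an interview, the value is less the final number than stating your weights explicitly: shifting weight from time to market toward data moat can change the ranking.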

3. Make a phased recommendation.

Do not give a single binary answer. Give a recommendation that evolves over time. “In the next 6 months, do X. Over 12-24 months, reassess based on Y triggers. Beyond 24 months, consider Z if conditions are met.”
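The phased structure becomes concrete once the triggers are written down as explicit conditions. This sketch is hypothetical: the three signals (monthly API spend, the fine-tuned model’s win rate against the API model, and ML team size) and their thresholds are assumed examples of “Y triggers,” and a real plan would choose its own.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    monthly_api_spend: float   # USD per month on third-party model APIs
    finetuned_win_rate: float  # 0-1, fine-tuned model vs. API model in pairwise evals
    ml_team_size: int          # engineers able to fine-tune, serve, and monitor models


# Hypothetical thresholds for the reassessment triggers.
SPEND_TRIGGER = 100_000.0
WIN_RATE_TRIGGER = 0.50
MIN_ML_TEAM = 5


def triggers_met(s: Signals) -> bool:
    """True only when cost, quality, and capability all justify migrating."""
    return (s.monthly_api_spend >= SPEND_TRIGGER
            and s.finetuned_win_rate >= WIN_RATE_TRIGGER
            and s.ml_team_size >= MIN_ML_TEAM)


def recommended_phase(months_elapsed: int, s: Signals) -> str:
    """Map elapsed time plus trigger status to a hypothetical phase recommendation."""
    if months_elapsed < 6:
        return "Ship on third-party APIs"
    if months_elapsed <= 24:  # reassessment window: act only if triggers fire
        return ("Migrate core features to a fine-tuned model" if triggers_met(s)
                else "Stay on APIs and reassess next quarter")
    return ("Consider deeper proprietary model investment" if triggers_met(s)
            else "Stay hybrid")


print(recommended_phase(3, Signals(20_000, 0.40, 2)))
print(recommended_phase(15, Signals(150_000, 0.55, 6)))
```

Requiring all three signals to fire is one design choice among several; it keeps a cheap-but-worse model or an unstaffed migration from being triggered by spend alone.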

4. Define AI-specific success metrics.

Beyond DAU and revenue, specify:

Cost per inference (and target trajectory)

Model quality metric relevant to the use case (accuracy, win rate, NPS on AI features)

Latency benchmarks

Fine-tuning ROI if applicable

API dependency ratio (what % of core product relies on a single external provider)
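Most of these metrics are simple ratios once the inputs are logged. This sketch shows one possible definition for four of them; the feature names, the provider labels, the ties-count-as-half win-rate convention, and the nearest-rank percentile are illustrative assumptions, not standard definitions.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Feature:
    name: str
    is_core: bool
    provider: Optional[str]  # external model provider, or None if served in-house


def cost_per_inference(total_inference_cost: float, inference_count: int) -> float:
    """Average serving cost of one model call over a period."""
    return total_inference_cost / inference_count


def win_rate(wins: int, losses: int, ties: int = 0) -> float:
    """Share of pairwise comparisons won, counting each tie as half a win."""
    return (wins + 0.5 * ties) / (wins + losses + ties)


def p95_latency_ms(latencies_ms: List[float]) -> float:
    """Nearest-rank 95th percentile of observed latencies."""
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer math
    return ordered[rank - 1]


def api_dependency_ratio(features: List[Feature]) -> float:
    """Share of core features that rely on the single most-used external provider."""
    core = [f for f in features if f.is_core]
    providers = Counter(f.provider for f in core if f.provider is not None)
    if not core or not providers:
        return 0.0
    top_count = providers.most_common(1)[0][1]
    return top_count / len(core)


# Hypothetical product: three core AI features, two on the same external API.
features = [
    Feature("playlist_generation", True, "provider_a"),
    Feature("conversational_search", True, "provider_a"),
    Feature("listening_recap", True, None),
    Feature("internal_tagging", False, "provider_b"),
]

print(f"Cost per inference: ${cost_per_inference(1_200.0, 400_000):.4f}")  # $0.0030
print(f"Win rate vs. baseline: {win_rate(55, 35, 10):.0%}")                # 60%
print(f"p95 latency: {p95_latency_ms(list(range(1, 101)))} ms")            # 95 ms
print(f"API dependency ratio: {api_dependency_ratio(features):.0%}")       # 67%
```

Tracking the dependency ratio over time turns “API dependency risk” from a worry into a number you can set a target for.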

5. Address risks with specific mitigations.

Every AI strategy has risks. Name them, and for each one, offer a concrete mitigation. Vague risk acknowledgment (“there are some risks”) is worse than no risk section at all.

What Step 3 looks like for our Spotify example

For Spotify, the Build option splits into two paths (training a proprietary LLM versus fine-tuning an open-source model), giving four concrete choices:

build a proprietary LLM (❌ too costly, wrong capability stage),

use APIs only (🟡 fast but margin-compressing at scale),

fine-tune an open-source model (✅ best medium-term fit),

or go hybrid (✅ recommended - ship on APIs now, migrate to a fine-tuned model over 18 months).

The full walkthrough of how these options score against each other is in the Spotify example section below.

The 5 AI Moats - Your Analytical Backbone

Before we walk through a full example, you need to have a strong grasp of the five sources of AI moat.

In almost every AI strategy question, the interviewer wants to know: what will prevent a competitor from replicating this? Your answer should come from the list below.

Subscribe to Crack PM Interview to keep reading this post and get 7 days of free access to the full post archives.