ChatGPT Hallucinations Increased This Quarter. How Would You Improve It? | OpenAI Interview

A complete PM interview walkthrough on reducing ChatGPT hallucinations, from diagnosing the root cause across the AI stack to proposing solutions with a phased plan.

Picture this: You are interviewing for a PM role at a leading AI company.

The interviewer leans forward and asks:

→ “ChatGPT’s hallucination rate has increased this quarter. How would you improve it?”

Your first instinct might be to suggest adding a disclaimer, building a fact-check feature, or retraining the model on better data.

But here is the catch:

Hallucinations are a symptom, not a root cause. Jumping to solutions without diagnosing which layer of the AI system is broken is the single most common mistake candidates make on this question, and it is often the mistake that costs them the offer.

This type of question is now standard at AI-first companies - OpenAI, Anthropic, Google DeepMind, Meta AI, and Microsoft.

It is asked not just for AI PM roles but for any PM role on a team that ships AI-powered features.

Interviewers use it to separate candidates who think in “systems” from candidates who think in “features”.

Strong candidates do not reach for the UX layer first.

They treat AI Product Improvement questions as a forensic investigation.

They diagnose across six layers of the AI stack, identify where the failure originates, and then propose targeted solutions - not a wishlist of product ideas.

This answer breakdown walks you through exactly how to do that.

Bonus: Infographic cheat sheet for answering “ChatGPT Hallucinations Increased. Improve It.”

Let’s dive in and answer this question.

How to Answer the AI Hallucination Improvement Question?

I will use the PQ-GUP-SEMS framework - the structured approach covered in detail in the “How to Answer AI Product Improvement Questions” guide - and apply it step by step to this specific question.

Here is the framework at a glance:

Step 0 - Keywords: Anchor to what the question is actually asking.

Step 1 - Product (P): Define the product, users, and value proposition.

Step 2 - Questions (Q): Ask clarifying questions to scope the problem.

Step 3 - Goal (G): Define what you are trying to achieve.

Step 4 - Users (U): Segment users and choose a primary focus.

Step 5 - Pain Points (P): Identify and prioritize what is going wrong.

Step 5.1 - Root Cause Analysis: An extra step for AI product improvement questions. Diagnose why the P1 pain points are happening across the AI stack.

Step 6 - Solutions (S): Generate solutions across system layers.

Step 7 - Evaluate (E): Prioritize solutions with trade-offs.

Step 8 - Metrics (M): Define AI-specific success metrics.

Step 9 - Summary (S): Deliver a crisp, executive-ready recommendation.

Use the mnemonic PQ-GUP-SEMS to remember the sequence.

Now, let’s break down each step.

Step 0: Listen for Keywords

The question is:

→ “ChatGPT hallucinations have increased this quarter. How would you improve it?”

Before anything else, break the question into its components and understand what each word is telling you.

1) “Hallucinations increased” - This is a regression signal, not a baseline complaint. The word “increased” tells you the problem is not just the product’s baseline accuracy: something changed and the product got worse. You are not being asked to build a better product from scratch. You are being asked to diagnose a degradation.

2) “This quarter” - A specific timeframe is one of the most valuable signals in any product problem. It means there is almost certainly a proximate cause - a model update, a fine-tuning run, a prompt change, an infrastructure change, or a shift in the user population. Treat this like a detective would treat “the crime happened on Thursday night.” It narrows your search significantly.

3) “How would you improve it” - The word “improve” means you need both diagnosis and solutions. You are not just being asked to explain what went wrong. You are being asked to fix it.

What is NOT mentioned:

No specific user segment is named

No metric is cited (is this user-reported? internally measured? third-party audited?)

No root cause is given

No timeframe for the fix

No budget or resource constraints

All of this is information you need to gather before going further.

💡 Interview Tip:

Write the question down exactly as stated. Anchor to the regression framing. This is a quality degradation problem, not a feature gap. The mental model that drives your answer should be forensic investigation, not product brainstorming.

SUBSCRIBE to get full access to in-depth breakdown of AI PM interview questions and schedule 1:1 mock interviews.

Step 1: Describe the Product

Before diagnosing the problem, demonstrate that you understand what ChatGPT actually is and what makes hallucinations particularly damaging to its core value proposition.

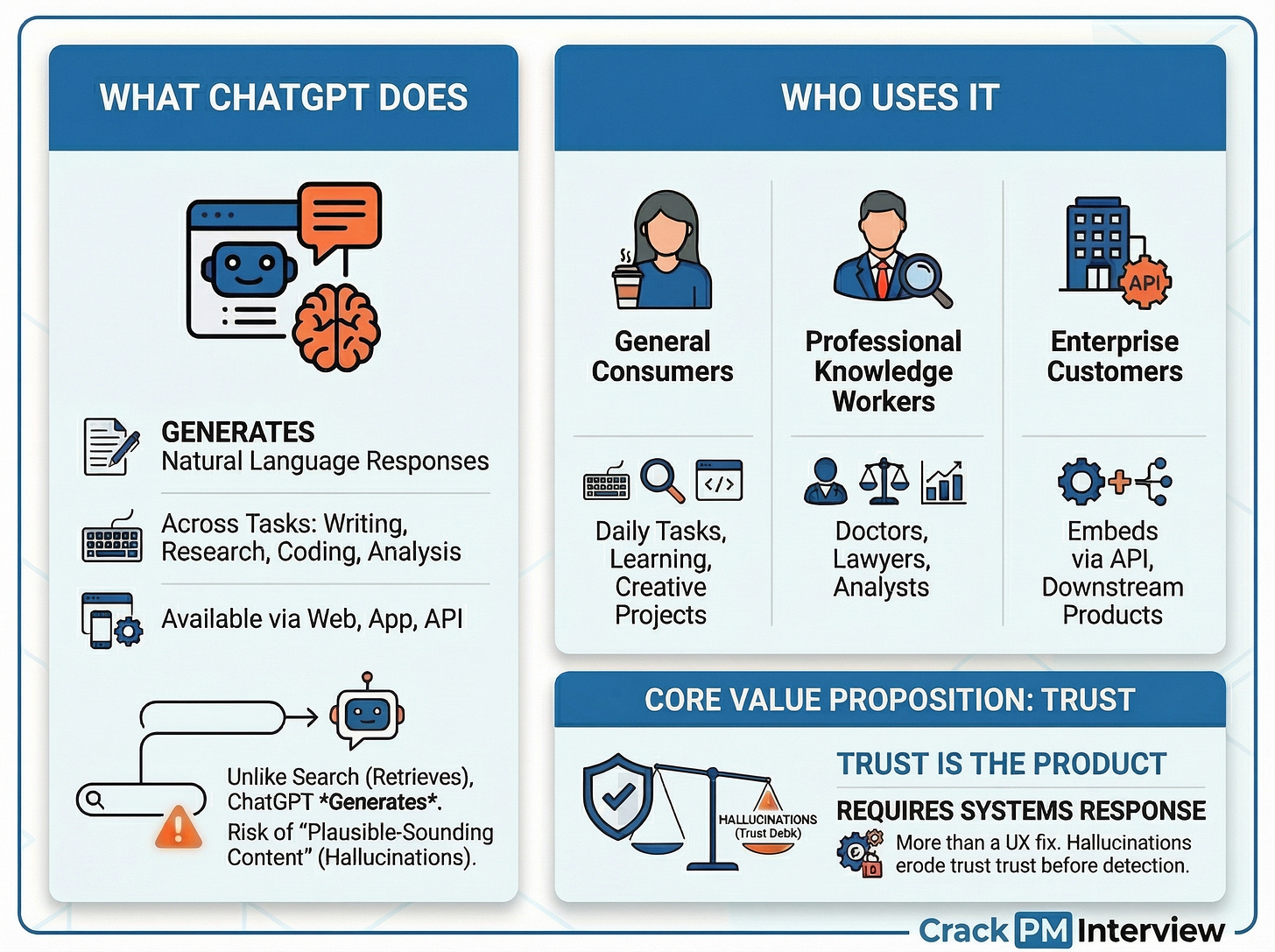

What Does ChatGPT Do?

ChatGPT is an AI-powered conversational assistant that generates natural language responses to user queries across writing, research, coding, analysis, summarization, and general conversation. It is available via a consumer web and mobile app, an API for developers and enterprises, and integrated enterprise products.

Unlike a search engine that retrieves and surfaces existing documents, ChatGPT generates responses.

This distinction matters enormously for the hallucination problem. Retrieval systems are usually wrong because the source is wrong.

Generative systems, however, can be wrong even when the source is right, because they can produce text that was never written anywhere.

Who Uses It?

ChatGPT serves three meaningfully different user groups:

General consumers who use it for daily tasks - writing assistance, learning, casual Q&A, trip planning, and creative projects. Their accuracy requirements are moderate and their tolerance for errors is relatively high.

Professional knowledge workers - doctors, lawyers, financial analysts, researchers, and consultants - who use it for domain-specific research, drafting, summarization, and analysis. Their accuracy requirements are high and their tolerance for errors is very low. A wrong answer about a drug interaction, a legal precedent, or a financial regulation is not just unhelpful - it is potentially harmful.

Enterprise customers who access ChatGPT via API and embed its outputs directly into their own customer-facing products. For this group, hallucinations are not just a user experience problem. They cascade downstream into their products and their users. A single hallucination in an AI-powered medical information tool can affect thousands of end users.

The Core Value Proposition

ChatGPT’s core promise is reliable, contextually relevant, natural language responses at scale. But there is a deeper version of this promise that matters for this problem: trust.

Trust is not a feature of ChatGPT. Trust IS the product. A user who does not trust the outputs has no reason to use it over a search engine, a textbook, or a human expert. Every hallucination is a direct debit from the trust account - and unlike a slow page load or a clunky UI, a hallucination that a user does not catch can cause real harm before the trust damage is even registered.

This is why the hallucination problem demands more than a UX fix. It demands a systems response.

💡 Interview Tip:

After describing your understanding of the product, ask the interviewer:

“Does this align with your understanding of ChatGPT’s value proposition and user base?”

Step 2: Ask Clarifying Questions

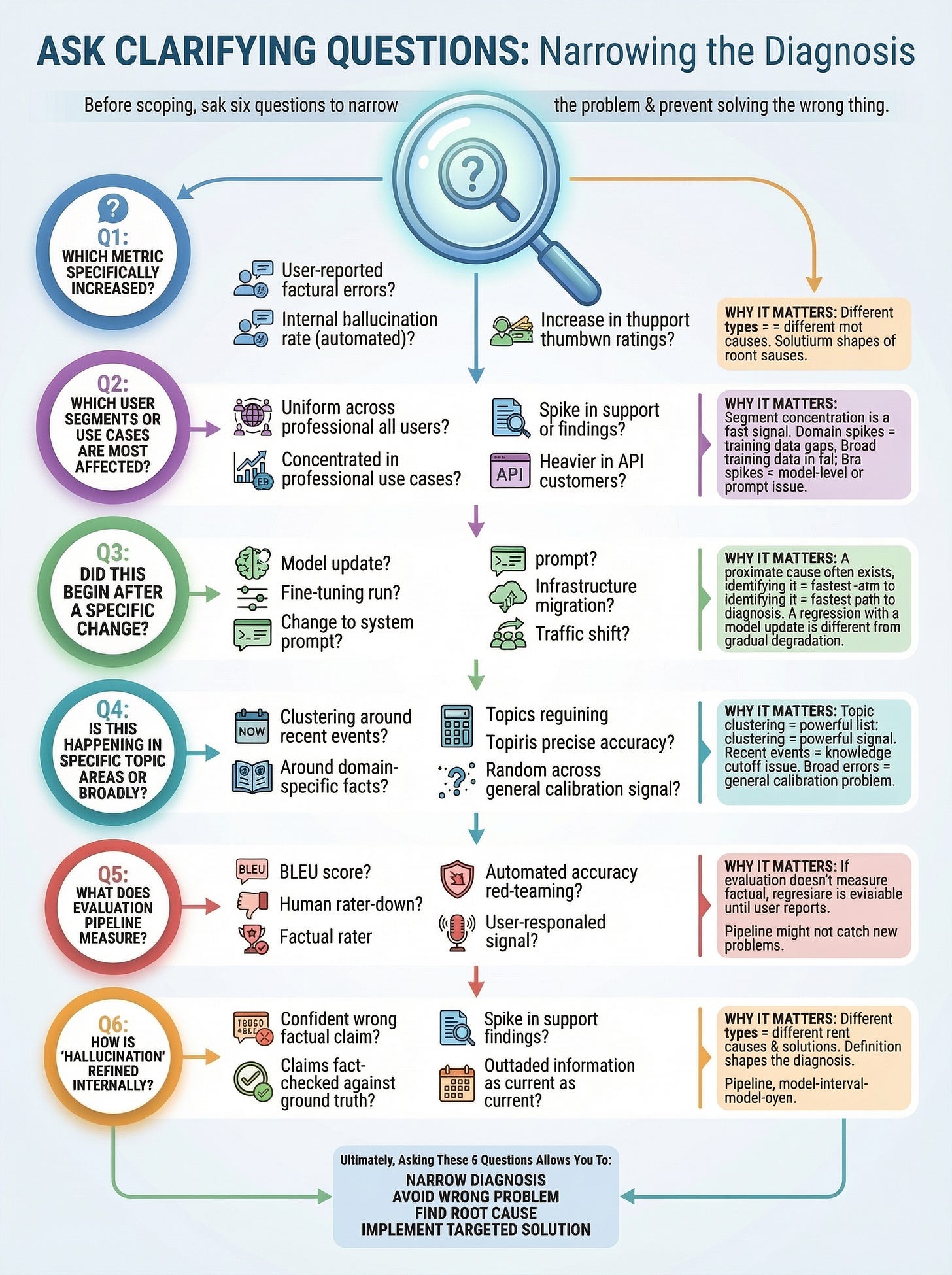

Before scoping the problem, ask six clarifying questions. Each one is designed to narrow the diagnosis and prevent you from solving the wrong problem.

Q1: Which metric specifically increased?

Is it a rise in user-reported factual errors?

An internal hallucination rate measured by automated evaluation?

Third-party audit findings?

A spike in support tickets about inaccurate outputs?

An increase in thumbs-down ratings on specific response types?

Why this matters: Different metrics point to different root causes. A spike in user-reported errors in a specific domain suggests a data or retrieval gap. A broad increase in an internal automated hallucination rate suggests a model or prompt layer issue. An increase in thumbs-down without a corresponding increase in explicit error reports may mean the problem is in confidence calibration - the model is not more wrong, it is more assertively wrong.
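To make “more assertively wrong” concrete, here is a minimal sketch of expected calibration error (ECE), one common way to score calibration: responses are grouped by stated confidence, and the gap between average confidence and actual accuracy is averaged across groups. It assumes each response carries a numeric confidence and a correct/incorrect label, which is an assumption for illustration rather than something ChatGPT is known to expose; the `Prediction` type and the ten-response samples are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    confidence: float  # stated probability (0-1) that the answer is correct
    correct: bool      # whether the answer was judged factually correct


def expected_calibration_error(preds: list[Prediction], n_bins: int = 10) -> float:
    """Bin by confidence, then average |mean confidence - accuracy|, weighted by bin size."""
    if not preds:
        return 0.0
    bins: list[list[Prediction]] = [[] for _ in range(n_bins)]
    for p in preds:
        # min() keeps confidence == 1.0 inside the top bin.
        bins[min(int(p.confidence * n_bins), n_bins - 1)].append(p)
    ece = 0.0
    for b in bins:
        if b:
            avg_conf = sum(p.confidence for p in b) / len(b)
            accuracy = sum(p.correct for p in b) / len(b)
            ece += len(b) / len(preds) * abs(avg_conf - accuracy)
    return ece


# Hypothetical samples: 6 of 10 answers are correct in both cases.
hedged = [Prediction(0.60, i < 6) for i in range(10)]
assertive = [Prediction(0.95, i < 6) for i in range(10)]

print(expected_calibration_error(hedged))     # ≈ 0.0
print(expected_calibration_error(assertive))  # ≈ 0.35
```

Both samples are 60% accurate, but the second claims 95% confidence, so its calibration error jumps from roughly 0 to roughly 0.35. Accuracy alone would not reveal the difference.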

Q2: Which user segments or use cases are most affected?

Is this happening uniformly across all users?

Or, is it concentrated in professional use cases - medical, legal, finance, technical?

Is it showing up more heavily in API customers?

Why this matters: Domain-specific spikes strongly suggest training data gaps or retrieval failures in those domains. Across-the-board spikes suggest a model-level or prompt-level issue that affects all query types equally. Segment concentration is one of the fastest diagnostic signals available.
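A minimal sketch of the segment check, assuming error reports and response volumes can be counted per segment for two quarters. The segment names, counts, and the 50% flagging threshold are hypothetical; a real analysis would also check whether the volume mix across segments shifted.

```python
def error_rate(errors: int, responses: int) -> float:
    return errors / responses if responses else 0.0


def segment_lift(prev: dict[str, tuple[int, int]],
                 curr: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Relative change in error rate per segment; values are (errors, responses)."""
    lifts = {}
    for segment, (errors, responses) in curr.items():
        before = error_rate(*prev[segment])
        after = error_rate(errors, responses)
        lifts[segment] = after / before - 1 if before else float("inf")
    return lifts


def concentrated_segments(lifts: dict[str, float], threshold: float = 0.5) -> list[str]:
    """Segments whose error rate rose by at least `threshold` (0.5 means +50%)."""
    return sorted(s for s, lift in lifts.items() if lift >= threshold)


# Hypothetical (errors, responses) per segment for last quarter and this quarter.
last_q = {"consumer": (100, 100_000), "professional": (50, 10_000), "api": (40, 20_000)}
this_q = {"consumer": (102, 100_000), "professional": (90, 10_000), "api": (44, 20_000)}

lifts = segment_lift(last_q, this_q)
print(concentrated_segments(lifts))  # ['professional']
```

In this made-up data the consumer and API segments barely move while the professional segment’s error rate rises 80%, the kind of concentration that points toward domain-specific data or retrieval gaps rather than a model-wide issue.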

Q3: Did this begin after a specific change?

Was there a model update?

A fine-tuning run?

A change to the system prompt?

An infrastructure migration?

A traffic shift from a new market or user type?

Why this matters: “This quarter” is telling you there is almost certainly a proximate cause. Identifying it is the fastest path to both diagnosis and fix. A regression that began the week of a model update is a very different problem from a gradual degradation over three months.
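One rough way to tell those two shapes apart in weekly data, sketched under assumptions: compare the mean error rate after the change with the mean before it, and check whether the rate was already climbing before the change shipped. The function name, the 10% threshold, and the sample series are hypothetical; a production analysis would use a proper change-point or statistical test.

```python
from statistics import mean


def regression_shape(weekly_rates: list[float], change_week: int,
                     threshold: float = 0.10) -> str:
    """Classify weekly error rates around the week a change shipped.

    Returns "gradual" if the rate was already rising before the change,
    "step" if it was flat before and jumped afterwards, else "none".
    """
    before, after = weekly_rates[:change_week], weekly_rates[change_week:]
    pre_drift = before[-1] / before[0] - 1  # relative rise within the pre-change weeks
    jump = mean(after) / mean(before) - 1   # relative jump after the change
    if pre_drift >= threshold:
        return "gradual"
    if jump >= threshold:
        return "step"
    return "none"


# Hypothetical weekly error rates (per 1,000 responses); the change shipped at index 4.
step_series = [1.00, 1.01, 0.99, 1.00, 1.20, 1.19, 1.21]
drift_series = [1.00, 1.05, 1.10, 1.15, 1.20, 1.25, 1.30]

print(regression_shape(step_series, 4))   # step
print(regression_shape(drift_series, 4))  # gradual
```

A “step” result makes the change that shipped that week the prime suspect; a “gradual” result suggests the cause predates it.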

Q4: Is this happening in specific topic areas or broadly?

Are errors clustering around recent events?

Around domain-specific facts in particular fields?

Around topics that require precise numerical accuracy?

Or, is it random across query types?

Why this matters: Topic clustering is a powerful diagnostic signal. Errors concentrated in recent events point to knowledge cutoff issues or retrieval failures. Errors in specific professional domains point to training data gaps. Broad errors across random topics point to a general model calibration problem.

Q5: What does the current evaluation pipeline measure?

Are we using BLEU score?

Human rater thumbs-down?

Factual accuracy benchmarks?

Automated red-teaming? Domain-specific accuracy tests?

Or, primarily user-reported signal?

Why this matters: If the evaluation pipeline does not directly measure factual accuracy, regressions in hallucination rate can stay invisible until users report them. Some apparent increases are not new problems at all - they were always there but are now being measured better. Or, more dangerously, they are new problems that the evaluation pipeline was not designed to catch.
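To see why a BLEU-style score can miss a factual regression, here is a minimal sketch of clipped unigram precision, the 1-gram component of BLEU. Full BLEU combines clipped 1- to 4-gram precisions with a brevity penalty, so this is a simplification, and the dosage sentences about a fictional “drug x” are invented for illustration.

```python
from collections import Counter


def unigram_precision(candidate: str, reference: str) -> float:
    """Share of candidate tokens that also appear in the reference (counts clipped)."""
    cand_counts = Counter(candidate.lower().split())
    ref_counts = Counter(reference.lower().split())
    total = sum(cand_counts.values())
    if total == 0:
        return 0.0
    matched = sum(min(n, ref_counts[token]) for token, n in cand_counts.items())
    return matched / total


reference = "the maximum daily dose of drug x is 4 grams"
wrong = "the maximum daily dose of drug x is 40 grams"     # factually wrong
right = "do not take more than 4 grams of drug x per day"  # correct paraphrase

print(round(unigram_precision(wrong, reference), 2))  # 0.9
print(round(unigram_precision(right, reference), 2))  # 0.42
```

The answer that is off by a factor of ten scores 0.9, while the correct paraphrase scores about 0.42. An overlap metric rewards sounding like the reference, not being right, which is how a pipeline built on BLEU and thumbs-down ratings can let a hallucination regression through.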

Q6: How is “hallucination” defined internally?

Is it any confident wrong factual claim?

Only claims that can be fact-checked against a ground truth?

Fabricated citations?

Outdated information stated as current?

Plausible-sounding but invented domain knowledge?

Why this matters: Different hallucination types have different root causes and require different solutions. Conflating them produces vague answers. Confabulation (inventing plausible-sounding facts) is a model-layer problem. Outdated information stated as current is a data freshness or retrieval problem. Fabricated citations are a different failure mode from factually incorrect statements. The definition shapes the diagnosis.
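A small sketch of how that taxonomy could drive triage, assuming error reports have already been labeled by type. The enum and the type-to-layer mapping simply encode the distinctions in the paragraph above; the text does not assign fabricated citations to a specific layer, so the sketch keeps them in their own bucket. The labeled reports are hypothetical.

```python
from collections import Counter
from enum import Enum


class HallucinationType(Enum):
    CONFABULATION = "invented, plausible-sounding facts"
    OUTDATED_AS_CURRENT = "outdated information stated as current"
    FABRICATED_CITATION = "fabricated citation"


# Where to look first for each type, per the distinctions drawn in the text.
SUSPECT_LAYER = {
    HallucinationType.CONFABULATION: "model",
    HallucinationType.OUTDATED_AS_CURRENT: "data freshness / retrieval",
    HallucinationType.FABRICATED_CITATION: "citation failure mode (investigate separately)",
}


def triage(labels: list[HallucinationType]) -> list[tuple[str, int]]:
    """Count labeled reports per suspect layer, most frequent first."""
    return Counter(SUSPECT_LAYER[label] for label in labels).most_common()


# Hypothetical labeled sample of user error reports.
reports = ([HallucinationType.OUTDATED_AS_CURRENT] * 5
           + [HallucinationType.CONFABULATION] * 3
           + [HallucinationType.FABRICATED_CITATION])
for layer, count in triage(reports):
    print(f"{layer}: {count}")
```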

Assumed Interviewer Response:

“Good questions. Here is what we know:

The metric is user-reported factual errors, up 18% quarter-over-quarter

Most affected: professional users asking domain-specific questions in medical, legal, and finance

Trigger: a fine-tuning update was deployed six weeks ago

Topic pattern: concentrated in recent events and domain-specific facts

Evaluation pipeline: currently tracks BLEU score and thumbs-down ratings; no factual accuracy benchmark exists

Internal definition: outputs that assert false facts confidently with no uncertainty signal”

We now have clear parameters to work with.


Step 3: Define the Goal

With the scope established, define the goal precisely before moving forward.

Primary Goal

Reduce user-reported factual error rate by 30% within two quarters, starting with professional user segments, without degrading overall response quality or materially increasing latency.
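A quick check on what the 30% target implies, assuming the 18% rise and the 30% cut are both relative changes and that the cut is measured from the current, elevated rate. Under those assumptions, undoing the regression alone needs only about a 15% cut, and a 30% cut lands roughly 17% below last quarter’s level.

```python
def net_change(increase: float, reduction: float) -> float:
    """Net relative change vs the original rate after a rise followed by a cut."""
    return (1 + increase) * (1 - reduction) - 1


def break_even_reduction(increase: float) -> float:
    """Relative cut that exactly undoes a relative rise of `increase`."""
    return 1 - 1 / (1 + increase)


print(f"{net_change(0.18, 0.30):+.1%}")     # -17.4%
print(f"{break_even_reduction(0.18):.1%}")  # 15.3%
```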

Why This Matters Strategically

The 30% target is not arbitrary. An 18% quarter-over-quarter increase in reported errors is a trust-erosion signal that, left unaddressed, compounds. Professional users who lose trust rarely return - they are the segment most likely to evaluate alternatives systematically and least likely to forgive repeated errors. Here is what is at stake across the business:

Trust capital: Trust, once broken with professional users, is extremely difficult to rebuild. The cost of recovery is almost always higher than the cost of prevention.

Liability exposure: Hallucinations in medical, legal, and financial contexts carry real-world risk. A wrong answer about medication dosage or a mischaracterized legal precedent is not a UX problem - it is a product liability problem.

Enterprise cascade: API customers embed ChatGPT outputs into their own products, so a single model-level hallucination pattern can multiply into errors across many downstream products. One model regression can generate hundreds of customer escalations.

Competitive signal: Every quarter of elevated hallucination rates is a quarter in which competitors with stronger accuracy claims can make a credible case for switching. The hallucination narrative in media compounds this.

Evaluation infrastructure: Fixing this forces the organization to build hallucination-specific evaluation capabilities that do not currently exist. That infrastructure prevents the next regression from going undetected.
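A minimal sketch of such a release gate, assuming a fixed hallucination benchmark scored with the internal definition from Step 2 (a false fact asserted with no uncertainty signal). `EvalResult`, `passes_gate`, the 500-item minimum, and the zero-tolerance default are hypothetical choices; a real gate would also account for sampling noise, for example with confidence intervals.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    hallucination_rate: float  # share of benchmark items with a false, unhedged claim
    n_items: int               # number of benchmark items scored


def passes_gate(candidate: EvalResult, baseline: EvalResult,
                max_relative_increase: float = 0.0, min_items: int = 500) -> bool:
    """Allow a model update to ship only if it does not regress on the benchmark."""
    if candidate.n_items < min_items:
        return False  # too few items to trust the comparison
    allowed = baseline.hallucination_rate * (1 + max_relative_increase)
    return candidate.hallucination_rate <= allowed


baseline = EvalResult(hallucination_rate=0.040, n_items=2_000)
print(passes_gate(EvalResult(0.038, 2_000), baseline))  # True: improves on baseline
print(passes_gate(EvalResult(0.047, 2_000), baseline))  # False: regression blocked
print(passes_gate(EvalResult(0.010, 100), baseline))    # False: sample too small
```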

Success Definition

User-reported factual error rate down 30%, sustained for four consecutive weeks.

Calibration score improving: the model’s expressed uncertainty should track its actual accuracy more closely.

Zero model updates shipping without passing a hallucination-specific benchmark gate.
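The first success criterion can be checked mechanically. This sketch reads “sustained for four consecutive weeks” as the four most recent weekly rates all sitting at or below 70% of the starting rate; that reading, the function name, and the sample series are assumptions for illustration.

```python
def sustained_reduction(start_rate: float, weekly_rates: list[float],
                        target_reduction: float = 0.30, weeks: int = 4) -> bool:
    """True if each of the last `weeks` weekly rates is at least `target_reduction` below start."""
    if len(weekly_rates) < weeks:
        return False
    ceiling = start_rate * (1 - target_reduction)
    return all(rate <= ceiling for rate in weekly_rates[-weeks:])


# Hypothetical weekly error rates per 1,000 responses, starting from 1.18.
print(sustained_reduction(1.18, [1.00, 0.85, 0.80, 0.79, 0.81, 0.80]))  # True
print(sustained_reduction(1.18, [0.80, 0.79, 0.90, 0.80]))              # False: one week above 0.826
```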


Step 4: Identify User Segments

ChatGPT serves meaningfully distinct user segments with different hallucination exposure, different harm profiles, and different expectations.


Subscribe to Crack PM Interview to keep reading this post and get 7 days of free access to the full post archives.