How to Answer AI Product Improvement Questions | AI PM Interview Guide (2026)

A step-by-step guide to answering the AI product improvement questions asked in AI PM interviews at AI-first companies.

You are sitting across from your interviewer at a top AI company. They lean forward and ask:

→ “ChatGPT hallucinations have increased this quarter. How would you improve it?”

Your mind races.

Is this a model problem?

A UX problem?

A data problem?

Do you talk about prompt engineering? RAG? Fine-tuning?

Where do you even start?

This is the moment where most PM candidates freeze or fall flat in AI PM interviews.

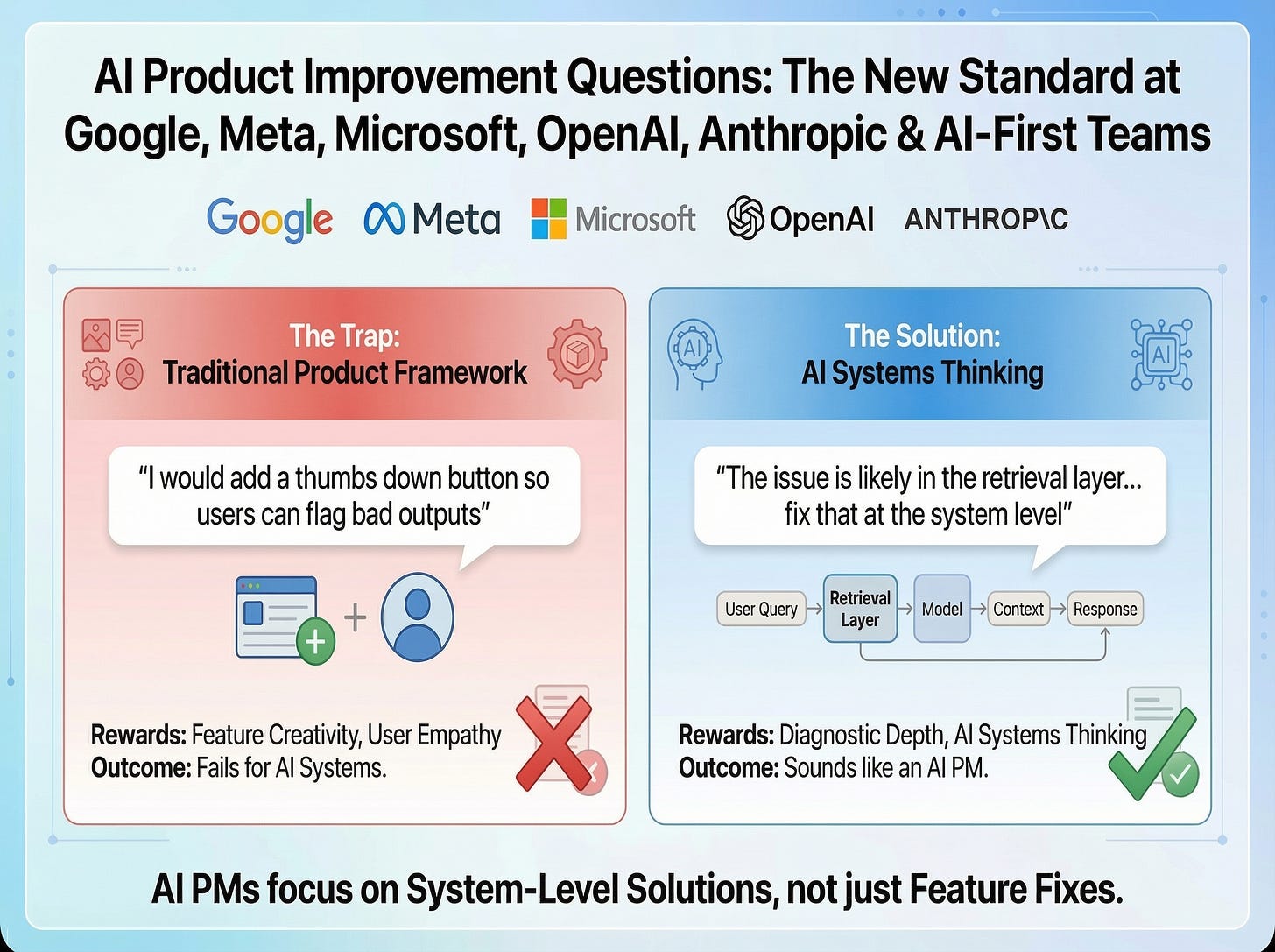

AI product improvement questions are becoming the new standard at companies like Google, Meta, Microsoft, OpenAI, Anthropic, and any product team that has shipped an AI-powered feature. The candidates who fail these questions are not failing because they lack product sense. They are failing because they are applying a traditional product improvement framework to a fundamentally different type of system.

A classic product improvement question rewards feature creativity and user empathy (along with a structured approach, of course). An AI product improvement question rewards diagnostic depth and AI systems thinking.

The candidate who says “I would add a thumbs down button so users can flag bad outputs” sounds thoughtful on the surface.

But the candidate who says “the issue is likely in the retrieval layer, the model is pulling irrelevant context before generating the response, and here is how I would fix that at the system level” sounds like an AI PM.

This article walks you through:

the complete framework to answer any AI product improvement question,

an example with every step completed,

the most common mistakes candidates make,

and a set of practice questions to build your muscle memory.

BONUS: Infographic Cheatsheet for AI Product Improvement Questions

By the end, you will have a repeatable diagnostic process you can apply to any AI product, in any interview, under pressure.


Why Do Interviewers Ask AI Product Improvement Questions?

Before diving into the framework, understand what interviewers are actually evaluating when they ask these questions.

1. AI System Thinking

Can you reason about how AI systems fail, not just how products fail? Interviewers want to see that you understand the mechanics of an AI product, not just its surface-level UX.

2. Structured Diagnosis

Do you find root causes before jumping to solutions?

The fastest way to fail this question is to start pitching features before you have diagnosed what is actually broken.

3. Layer Awareness

Do you know the difference between a model problem and a prompt problem? Between a retrieval failure and a training data gap?

Naming the right layer signals real depth.

4. Trade-off Reasoning

Can you weigh accuracy versus latency versus cost?

AI improvements almost always come with real trade-offs. Interviewers want to see that you understand them.

5. User Trust Sensitivity

Do you understand that AI failures erode trust in a way that a broken button does not?

When an AI gives a wrong answer, users do not just get frustrated. They stop believing the product. That is a different problem that requires a different solution.

6. Metric Depth

Can you define metrics that capture AI quality, not just engagement?

Saying “I would measure DAU” for an AI accuracy problem signals that you do not understand how to evaluate AI systems.
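To make the contrast with engagement metrics concrete, here is a minimal sketch of two output-quality metrics computed from a graded sample of responses. The `EvalSample` fields, the function name, and the sample data are all hypothetical, chosen only for illustration; a real evaluation pipeline would define its own grading scheme.

```python
from dataclasses import dataclass


@dataclass
class EvalSample:
    """One graded response from a hypothetical evaluation set."""
    accepted: bool      # did the user accept or keep the output?
    hallucinated: bool  # did a grader flag an unsupported claim?


def quality_metrics(samples: list[EvalSample]) -> dict[str, float]:
    """Return acceptance and hallucination rates for a batch of graded samples."""
    if not samples:
        raise ValueError("need at least one graded sample")
    n = len(samples)
    return {
        "acceptance_rate": sum(s.accepted for s in samples) / n,
        "hallucination_rate": sum(s.hallucinated for s in samples) / n,
    }


# Illustrative data only: four graded responses.
samples = [
    EvalSample(accepted=True, hallucinated=False),
    EvalSample(accepted=True, hallucinated=True),
    EvalSample(accepted=False, hallucinated=True),
    EvalSample(accepted=True, hallucinated=False),
]
metrics = quality_metrics(samples)
print(metrics)
```

Note that the second sample is accepted even though it contains a hallucination. That is the kind of gap an engagement number like DAU cannot see, and the reason to track output quality directly.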

Remember: there is no single right answer to these questions. Interviewers care more about how you think than what you conclude. But for AI products, how you think must include system-level reasoning.

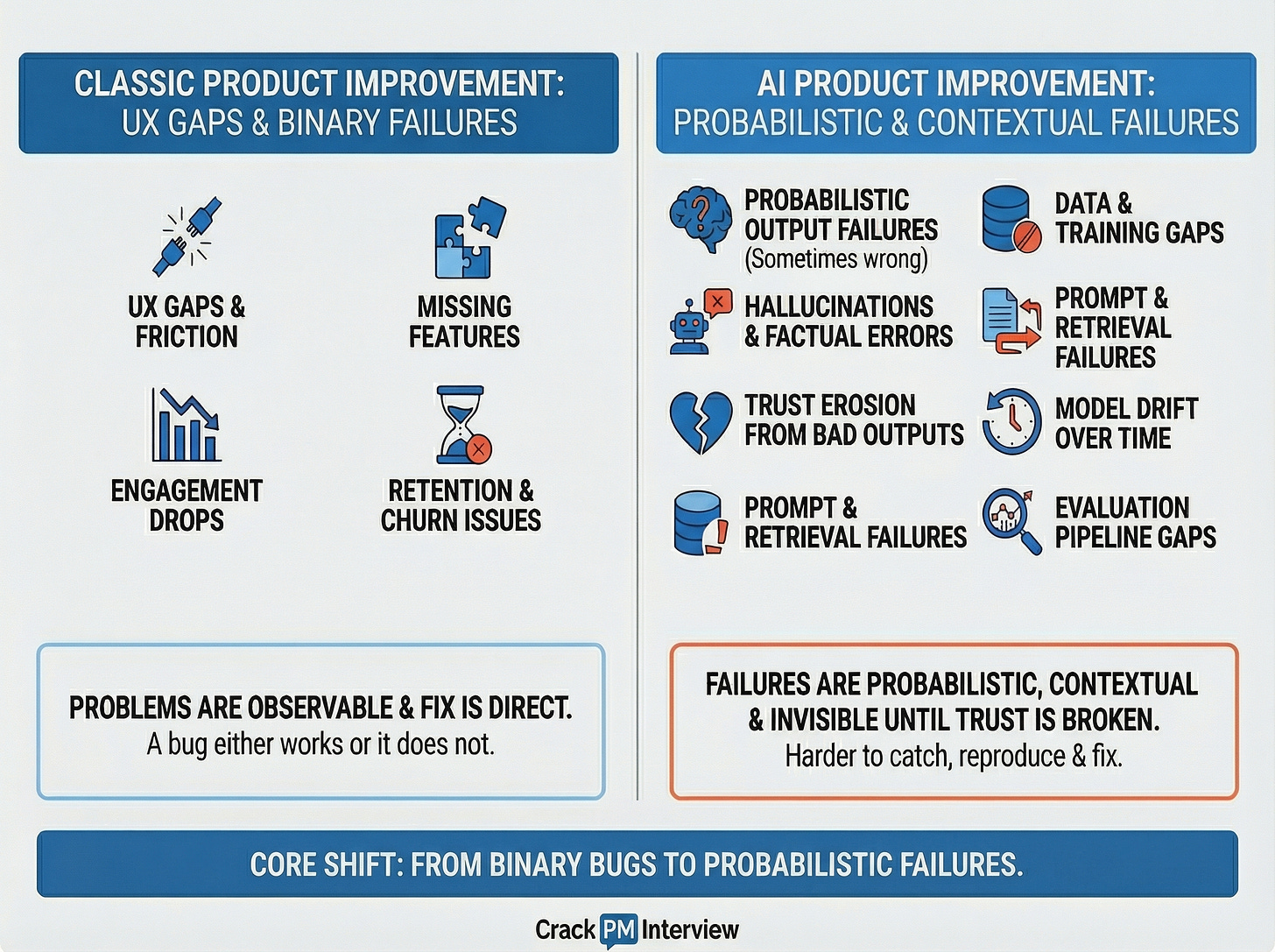

How Is AI Product Improvement Different From Classic Product Improvement?

To understand why the standard framework is not enough, you need to understand how AI systems fail differently.

Classic product improvement focuses on:

UX gaps and friction

Missing features

Engagement drops

Retention and churn issues

These problems are relatively straightforward to diagnose.

AI product improvement requires you to also diagnose:

Probabilistic output failures. The system is sometimes wrong, not always wrong.

Hallucinations and factual errors. The AI confidently states something that is false.

Trust erosion from bad outputs. Once a user sees a wrong answer, they start doubting all answers.

Data and training gaps. The model was trained on data that does not represent the current user context, causing systematic failures in specific domains.

Prompt and retrieval failures. The way the AI is instructed, or the context it is given, is causing poor outputs even when the underlying model is capable.

Model drift over time. The model that performed well six months ago now underperforms because the world has changed and the training data has not.

Evaluation pipeline gaps. Nobody is systematically measuring whether the AI outputs are correct, so problems compound silently over time.

The core shift in thinking:

In a traditional product, a bug is binary. It either works or it does not. When a form submission fails, you know it failed. You can reproduce it. You can fix it.

In an AI product, failures are probabilistic, contextual, and often invisible to the user until trust is already broken. The model gives a wrong answer only when asked about a specific domain. It hallucinates only when the retrieved context is thin. It underperforms only for users in certain languages. These failures are harder to catch, harder to reproduce, and harder to fix.
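One way to see why contextual failures stay invisible is to compare an aggregate error rate with the same rate sliced by segment. The sketch below uses made-up graded records and a hypothetical `language` field with placeholder labels; the numbers are illustrative, not real data.

```python
from collections import defaultdict


def error_rate_by_slice(records: list[dict], key: str) -> dict[str, float]:
    """Group graded records by `key` and return the error rate per group."""
    totals: dict[str, int] = defaultdict(int)
    errors: dict[str, int] = defaultdict(int)
    for record in records:
        totals[record[key]] += 1
        errors[record[key]] += int(record["wrong"])
    return {group: errors[group] / totals[group] for group in totals}


# Illustrative data: 90 responses in one language, 10 in another.
records = (
    [{"language": "en", "wrong": False}] * 88
    + [{"language": "en", "wrong": True}] * 2
    + [{"language": "other", "wrong": False}] * 5
    + [{"language": "other", "wrong": True}] * 5
)

overall = sum(r["wrong"] for r in records) / len(records)
by_language = error_rate_by_slice(records, "language")

print(f"overall error rate: {overall:.2f}")
for language, rate in by_language.items():
    print(f"{language}: {rate:.2f}")
```

In this made-up sample the overall error rate looks tolerable, while half of the responses in the smaller segment are wrong. A dashboard that tracks only the aggregate would miss that concentration.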

How to Answer AI Product Improvement Questions

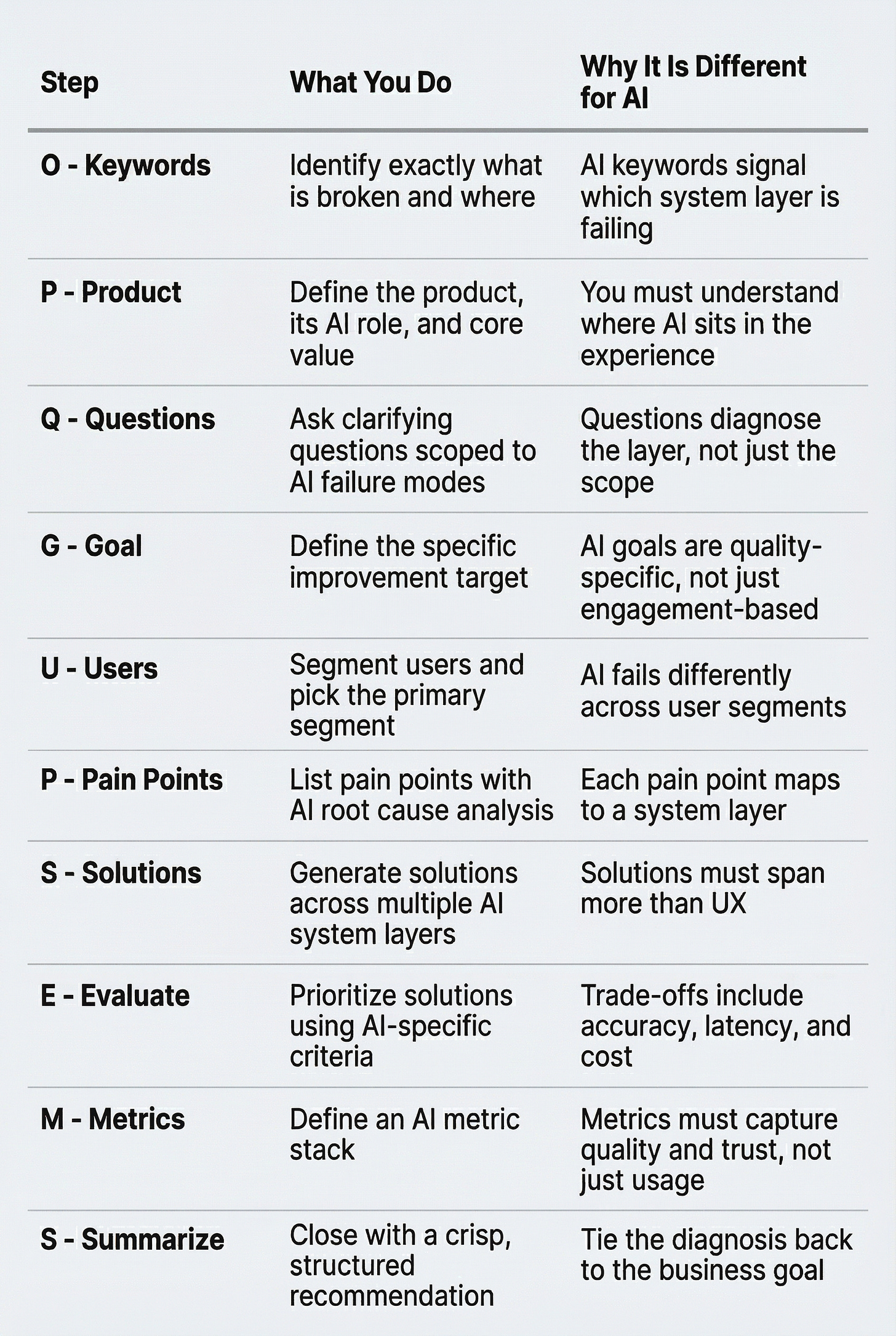

Here is a proven framework for answering any AI product improvement question. It builds on the classic PQ-GUP-SEMS structure and adds a critical AI diagnostic layer that most candidates miss.

PQ-GUP-SEMS for AI Products

Let’s break down each step.

Step 0: Listen for Keywords (The Hidden Step)

Before you say a single word, write down the exact question. This sounds obvious, but it is where many candidates go wrong.

Consider these two questions:

“How would you improve GitHub Copilot?”

“How would you improve the suggestion accuracy of GitHub Copilot for senior developers?”

The first is open-ended. The second has a specific failure mode (inaccurate suggestions), a specific layer implicated (model or retrieval), and a specific user segment (senior developers). Your entire answer changes based on those details.

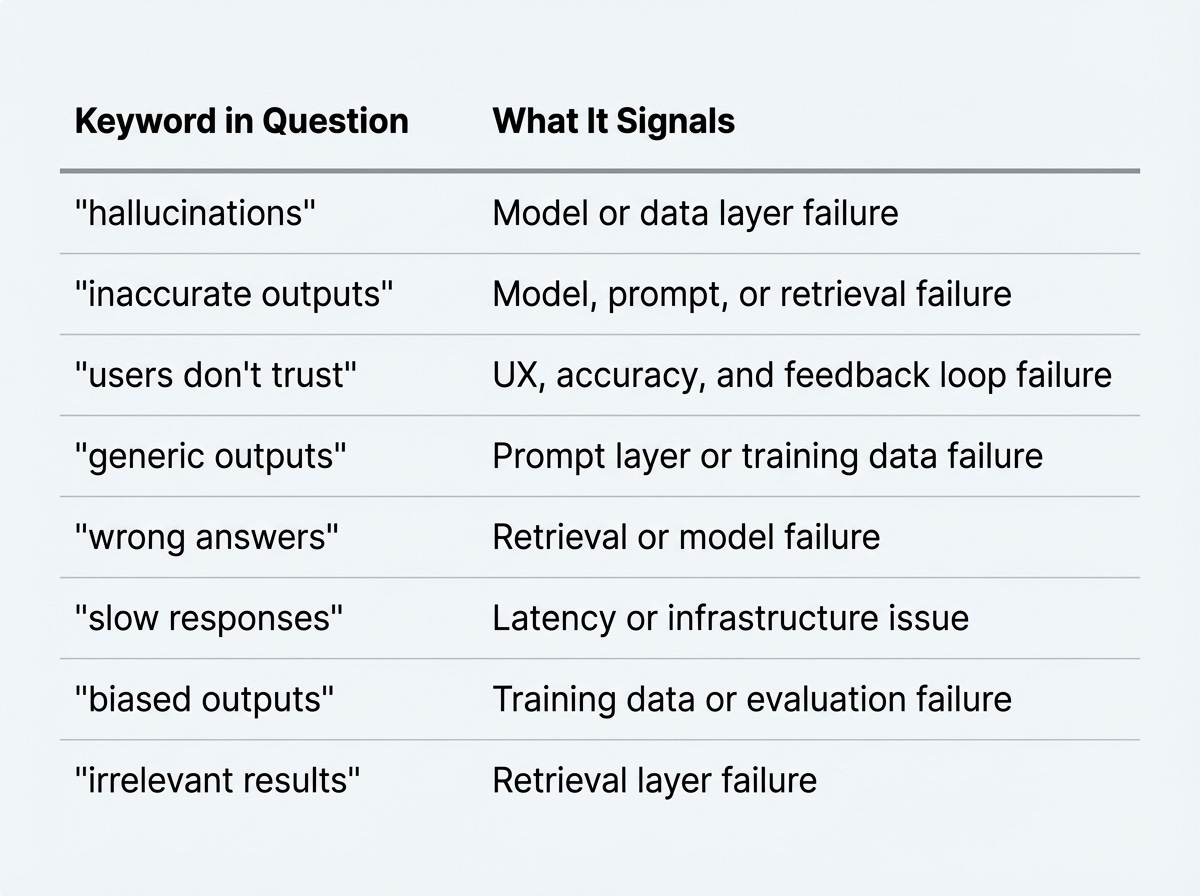

For AI products specifically, the keywords in the question are diagnostic signals. They tell you which layer of the AI system is likely broken before you even start your analysis.

AI-specific keyword signals to watch for:

💡 Interview Tip:

Always anchor your answer to the exact problem. Many candidates solve the wrong problem because they did not pay attention to the keywords. If the question says “hallucinations increased,” you are diagnosing an output quality problem, not a UX problem.

Step 1: Describe the Product

Start by demonstrating you understand both the product and the role AI plays in it. This is where AI product improvement questions diverge from classic ones. It is not enough to describe what the product does for users. You also need to describe what the AI does inside the product, and what breaks when the AI fails.

Cover these five elements:

What does the product do?

Who is it for?

Where does AI sit in the product experience?

What is the AI supposed to do for the user?

What breaks when the AI fails?

Example for Step 1, using GitHub Copilot:

“GitHub Copilot is an AI-powered coding assistant that suggests code completions and entire functions in real time as developers write. It serves developers ranging from beginners learning to code to senior engineers working on complex production systems.

The AI layer is the core of the product. It generates suggestions based on the surrounding code context, the file type, and patterns learned during training.

When the AI fails, the entire value proposition breaks down. Developers get wrong suggestions, waste time second-guessing the tool, and eventually stop using it altogether.”

Notice the difference from a classic product description. This version identifies where AI sits, what it is supposed to do, and what the downstream consequence of AI failure is.

That last element is critical. It connects the AI system behaviour to the user outcome and to the business impact.

Pro tip:

If you are genuinely unfamiliar with the product, ask the interviewer for a brief description before you proceed. Most interviewers will help. Then confirm your understanding before moving forward: “Does this align with how you see the product?”

Step 2: Ask Clarifying Questions

Never assume you know the full scope of the problem. Define every relevant dimension before you dive into analysis.

For AI products, clarifying questions serve a dual purpose. They narrow the scope, just like in any product improvement question. But they also diagnose the failure. The answers tell you which layer of the AI system to investigate first.

Five essential clarifying questions for any AI product improvement question:

1. What specific metric has dropped or is underperforming?

This tells you whether the problem is objectively measured (accuracy rate, acceptance rate) or subjectively reported (user complaints, NPS). An objective metric drop often points to a model or data change. A spike in user complaints often points to a UX or trust issue.

2. Which user segment is most affected?

AI systems fail differently across user types. A problem concentrated in one segment often points to a data gap. If senior developers are the only ones complaining, the model may lack sufficient training data on advanced patterns. If all segments are affected equally, the issue is more likely at the model or prompt layer.

3. When did the issue start? Was there a recent model update or data pipeline change?

Timing is one of the strongest diagnostic signals in AI systems. A problem that appeared after a model update points directly to the model or fine-tuning layer. A gradual degradation over months often points to model drift or training data becoming stale.

4. Are we measuring this through automated evaluation or user-reported feedback?

This tells you how reliable the signal is. Automated evals are precise but may not capture what users actually care about. User-reported feedback is noisy but reflects real experience. The measurement method shapes which solutions make sense.

5. Is this happening across all use cases or in specific contexts?

If the problem is universal, it is likely a model or prompt issue. If it is specific to certain domains, languages, or query types, it is more likely a retrieval or training data gap.

💡 Interview Tip:

For AI products, clarifying questions are not just about scope. They are diagnostic. The answers tell you which layer of the AI system to investigate first. A good interviewer will notice that your questions are pointed, not generic.
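The rules of thumb from the clarifying questions above can be sketched as a small triage function. This is a simplified illustration, not a definitive diagnostic: the `Answers` fields and the `layers_to_check` name are hypothetical, the order of the returned hints is an arbitrary choice, and real diagnosis would weigh the signals rather than apply them mechanically.

```python
from dataclasses import dataclass


@dataclass
class Answers:
    """Hypothetical summary of what the interviewer said in reply."""
    objective_metric_drop: bool  # Q1: measured drop, not just complaints
    single_segment: bool         # Q2: concentrated in one user segment
    after_model_update: bool     # Q3: started right after a model update
    gradual_decline: bool        # Q3: degraded slowly over months
    specific_contexts: bool      # Q5: limited to certain domains or query types


def layers_to_check(a: Answers) -> list[str]:
    """Map clarifying-question answers to the layers worth investigating."""
    hints: list[str] = []
    if a.after_model_update:
        hints.append("model or fine-tuning layer")
    if a.gradual_decline:
        hints.append("model drift or stale training data")
    if a.single_segment:
        hints.append("training data gap for the affected segment")
    if a.specific_contexts:
        hints.append("retrieval or training data gap")
    if not (a.single_segment or a.specific_contexts):
        hints.append("model or prompt layer")
    if not a.objective_metric_drop:
        hints.append("UX or trust issue")
    return hints


# Example: a measured, gradual decline affecting one segment in specific contexts.
answers = Answers(
    objective_metric_drop=True,
    single_segment=True,
    after_model_update=False,
    gradual_decline=True,
    specific_contexts=True,
)
hints = layers_to_check(answers)
for hint in hints:
    print(hint)
```

In an interview you would say this reasoning out loud rather than write code, but laying it out this way shows how each answer narrows the search before any solution is proposed.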

Step 3: Define Your Goal

If the interviewer has not specified a goal, you need to pick one and justify it clearly. The goal anchors everything that follows. It determines which pain points matter, which solutions are relevant, and which metrics you will track.

For AI products, goals are more specific than “improve engagement.” They are tied to output quality, user trust, or task success.

Common AI product improvement goals:
